March 24, 2026

In the deployment of AI voice agents in healthcare, latency is not just a technical issue—it is a trust issue.

Across multiple discussions in technical communities and forums like Reddit, users report a consistent experience: awkward pauses, delayed responses, and conversations that feel “unnatural.” In a healthcare environment—where precision, empathy, and clarity are critical—these issues directly impact:

- Patient trust

- Fluency in processes such as scheduling

- Perceived service quality

This article breaks down what latency really is in AI voice agents, why it happens, and the technical best practices to minimize it in clinical environments.

What is latency in AI voice agents?

Latency in an AI-powered call is the total time between when a user speaks and when they receive an audible response from the system.

This time is composed of multiple layers:

- Speech-to-Text (STT): converting speech into text

- LLM processing: interpretation + response generation

- Orchestration (business logic): validations, queries to clinical systems, scheduling

- Text-to-Speech (TTS): converting text into audio

- Network / telecommunications: audio transmission

Even small delays in each layer accumulate, creating a fragmented experience.
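To make the accumulation concrete, here is a minimal sketch that sums a per-layer latency budget for one conversational turn. The millisecond values are illustrative assumptions, not measurements from any real system, and the function names are hypothetical.

```python
# Illustrative per-layer latency budget for one conversational turn.
# All millisecond values are hypothetical placeholders, not benchmarks.
LAYER_BUDGET_MS = {
    "stt": 200,
    "llm": 500,
    "orchestration": 150,
    "tts": 200,
    "network": 100,
}


def total_latency_ms(budget: dict[str, int]) -> int:
    """Sum the layers, assuming they run strictly one after another."""
    return sum(budget.values())


def slowest_layer(budget: dict[str, int]) -> str:
    """Return the layer that contributes the most to the total."""
    return max(budget, key=budget.get)


if __name__ == "__main__":
    print(total_latency_ms(LAYER_BUDGET_MS), "ms total")
    print("slowest layer:", slowest_layer(LAYER_BUDGET_MS))
```

No single layer in this made-up budget looks alarming on its own, yet the sequential total is over a second, which is the point of measuring each layer separately.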

Why latency directly impacts patient trust

In healthcare, conversation is not just functional—it is emotional.

High latency creates:

- The feeling that the system “doesn’t understand”

- Interruptions during critical moments (symptoms, urgency)

- Perception of low technological quality

- Distrust in how sensitive information is handled

Key insight:

Users don’t measure milliseconds. They measure fluency.

If a conversation doesn’t flow, patients assume the system is unreliable—even if it is technically accurate.

Latency and its effect on medical scheduling

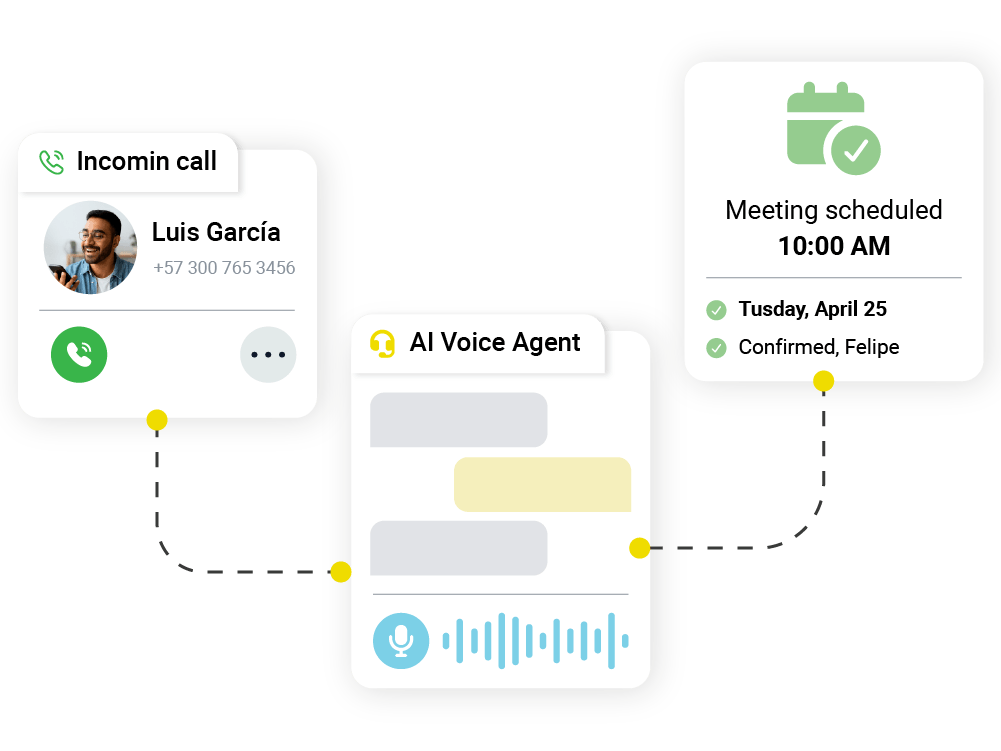

One of the primary use cases for voice agents in healthcare is scheduling.

Here, latency directly impacts:

- Call abandonment: long pauses reduce completion rates

- Data capture errors: users repeat information or get confused

- Call duration: higher operational costs

- Conversion: lower appointment confirmation rates

A smooth interaction can significantly reduce call time and improve operational efficiency.

Technical components where latency occurs in AI voice agents

To optimize, you first need to understand where it happens.

1. Speech-to-Text (STT) models

- Latency depends on:

  - Model size

  - Batch vs streaming processing

- Common issue: transcription only starts after the user has fully finished speaking

Best practice: use real-time STT (streaming partial transcripts)

2. LLM inference

- This is often the most time-consuming component

- Key factors:

  - Model size

  - Context length

  - Prompt complexity

Common problem: oversized prompts with too much embedded logic

3. Backend integrations

- Queries to:

  - Scheduling systems

  - EHR/EMR systems

  - Insurance validation services

Risk: slow APIs blocking responses

4. Text-to-Speech (TTS)

- More natural models tend to be slower

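- Full generation (synthesize the whole response before playback) vs streaming (start playback as soon as the first audio chunks are ready)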

5. Agent orchestration

- Turn-taking management

- Decision on when to respond

Technical best practices to reduce latency

1. Implement end-to-end streaming architecture

Instead of waiting for each component to finish:

- STT → send partial transcripts

- LLM → generate incremental responses

- TTS → play audio while it is being generated

Result: drastic reduction in perceived wait time
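The hand-off between the LLM and TTS stages can be sketched with Python's `asyncio`. This is a simplified illustration under stated assumptions: `fake_llm_tokens` is a hypothetical stand-in for a streaming LLM client, and the TTS step is represented by collecting sentences rather than synthesizing audio.

```python
import asyncio
from typing import AsyncIterator


async def fake_llm_tokens(transcript: str) -> AsyncIterator[str]:
    """Hypothetical stand-in for a streaming LLM: yields the reply word by word."""
    reply = f"Sure, I can help you with {transcript}. What day works best?"
    for word in reply.split():
        await asyncio.sleep(0)  # hand control back to the loop, like a network stream
        yield word


async def sentences(tokens: AsyncIterator[str]) -> AsyncIterator[str]:
    """Group tokens into sentences so TTS can start before the full reply exists."""
    buffer: list[str] = []
    async for token in tokens:
        buffer.append(token)
        if token.endswith((".", "?", "!")):
            yield " ".join(buffer)
            buffer = []
    if buffer:  # flush a trailing fragment that has no end punctuation
        yield " ".join(buffer)


async def respond(transcript: str) -> list[str]:
    """Collect the chunks that would be handed to a streaming TTS engine."""
    chunks: list[str] = []
    async for sentence in sentences(fake_llm_tokens(transcript)):
        chunks.append(sentence)  # a real agent would synthesize and play audio here
    return chunks


if __name__ == "__main__":
    print(asyncio.run(respond("scheduling")))
```

The first sentence is available for synthesis while the model is still producing the second, so the patient hears audio sooner even though total generation time is unchanged.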

2. Design optimized and modular prompts

- Reduce unnecessary tokens

- Separate logic into layers (not everything in the prompt)

- Use clear and concise instructions

Rule of thumb: every prompt token has to be processed before the model can start responding, so less context = lower latency

3. Use hybrid model strategies

Not everything requires a large LLM.

- Classification → small models

- Structured responses → templates

- LLM only for complex cases

This significantly reduces inference time.
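A routing layer along these lines might look like the sketch below. The keyword classifier stands in for a small classification model, and the intents, keywords, and template texts are hypothetical examples rather than recommended values.

```python
import re
from typing import Callable

# Hypothetical canned answers for requests that need no generation at all.
TEMPLATES = {
    "hours": "We are open Monday to Friday, 8 am to 6 pm.",
    "location": "We are located at the main clinic building.",
}

# Hypothetical keywords; a small trained classifier would replace this table.
KEYWORDS = {
    "hours": ("hours", "open", "close"),
    "location": ("address", "located", "location", "where"),
}


def classify(utterance: str) -> str:
    """Cheap intent classification; returns 'complex' when nothing matches."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    for intent, keywords in KEYWORDS.items():
        if words & set(keywords):
            return intent
    return "complex"


def route(utterance: str, llm: Callable[[str], str]) -> str:
    """Answer from a template when possible; call the large model otherwise."""
    intent = classify(utterance)
    if intent in TEMPLATES:
        return TEMPLATES[intent]
    return llm(utterance)
```

For example, `route("What time do you open?", llm)` returns the hours template without calling `llm`, while a request to reschedule around symptoms falls through to the model.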

4. Smart response caching

Common healthcare cases:

- Hours of operation

- Locations

- FAQs

Preprocessing and caching reduce model calls.
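One possible shape for such a cache is sketched below: answers are keyed on a normalized form of the question and expire after a time-to-live, so stale hours or locations are eventually refreshed. The class name, the normalization rule, and the TTL are assumptions made for illustration.

```python
import time
from typing import Callable


class TTLCache:
    """Cache answers to repeated questions for a limited time."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(question: str) -> str:
        # Normalize case and whitespace so trivially different phrasings match.
        return " ".join(question.lower().split())

    def get_or_compute(self, question: str,
                       compute: Callable[[str], str]) -> str:
        key = self._key(question)
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # cache hit: no model call
        answer = compute(question)  # cache miss or expired: call the model
        self._store[key] = (now, answer)
        return answer
```

Exact-match keys only catch repeated phrasings; matching paraphrases would need an intent classifier or similarity lookup in front of the cache.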

5. Integration optimization

- Use asynchronous APIs

- Pre-fetch relevant data

- Controlled timeouts

Example: load availability before the user explicitly requests it.
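The three points above can be combined in a short `asyncio` sketch. `fetch_availability` is a hypothetical stand-in for a scheduling API call, and the delays, timeout, and slot values are illustrative assumptions.

```python
import asyncio


async def fetch_availability(delay_s: float) -> list[str]:
    """Hypothetical scheduling API call; delay_s simulates backend slowness."""
    await asyncio.sleep(delay_s)
    return ["Tue 10:00", "Wed 14:30"]  # made-up slots


async def with_timeout(awaitable, timeout_s: float, fallback):
    """Return the result, or the fallback if the call exceeds timeout_s."""
    try:
        return await asyncio.wait_for(awaitable, timeout=timeout_s)
    except asyncio.TimeoutError:
        return fallback


async def handle_turn(backend_delay_s: float) -> list[str]:
    # Pre-fetch: start the query as soon as scheduling intent looks likely,
    # while the agent is still busy with an earlier step of the conversation.
    prefetch = asyncio.create_task(fetch_availability(backend_delay_s))
    await asyncio.sleep(0.01)  # simulates that earlier step (e.g. confirming identity)
    # Controlled timeout: never let a slow backend stall the spoken response.
    return await with_timeout(prefetch, timeout_s=0.05, fallback=[])


if __name__ == "__main__":
    print(asyncio.run(handle_turn(0.0)))  # fast backend
    print(asyncio.run(handle_turn(1.0)))  # slow backend
```

With a fast backend the slots are already waiting when the agent needs them; with a slow one the agent gets an empty fallback and can say it is still checking instead of going silent.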

6. Conversational turn-taking control

Turn-taking is one of the biggest factors in how latency is perceived. Two failure modes are common:

- The agent responds too late

- Or interrupts the user

Solution:

- Detect natural pauses (endpointing)

- Adjust silence sensitivity

- Allow “barge-in” (user interruptions)
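Endpointing and silence sensitivity can be illustrated with a simple energy-based detector. This is a deliberately minimal sketch: production systems typically use a trained voice activity detector, and the threshold and frame counts here are assumed values. Barge-in handling is not covered.

```python
from typing import Optional, Sequence


def find_endpoint(frame_energies: Sequence[float],
                  silence_threshold: float,
                  min_silence_frames: int) -> Optional[int]:
    """Return the index of the frame where the user's turn is judged complete.

    A turn ends once speech has been heard and is followed by
    min_silence_frames consecutive frames below silence_threshold.
    Returns None if no endpoint is found in the given frames.
    """
    heard_speech = False
    silent_run = 0
    for index, energy in enumerate(frame_energies):
        if energy >= silence_threshold:
            heard_speech = True
            silent_run = 0  # speech resumed, so the pause was not an endpoint
        elif heard_speech:
            silent_run += 1
            if silent_run >= min_silence_frames:
                return index
    return None
```

`min_silence_frames` is the sensitivity knob: a low value responds quickly but risks cutting the patient off at a brief hesitation, while a high value is safer but adds dead air to every turn.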

7. Infrastructure close to the user (edge / region)

- Reduce network latency

- Deploy services in regions close to the patient

Especially relevant in distributed healthcare systems.

8. Real-time latency monitoring

You can’t optimize what you don’t measure.

Key metrics:

- Total response time

- Time per component (STT, LLM, TTS)

- Abandonment rate

- Average call duration
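Per-component timing can be captured with a small helper like the one below. It is a sketch, assuming in-process measurement with `time.perf_counter`; the class and component names are hypothetical, and a real deployment would export these samples to its monitoring stack.

```python
import math
import time
from collections import defaultdict
from contextlib import contextmanager


class TurnTimer:
    """Record how long each pipeline component takes, in milliseconds."""

    def __init__(self):
        self.samples_ms = defaultdict(list)

    @contextmanager
    def measure(self, component: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the sample even if the component raised an exception.
            elapsed_ms = (time.perf_counter() - start) * 1000
            self.samples_ms[component].append(elapsed_ms)


def percentile(samples, pct: float) -> float:
    """Nearest-rank percentile; pct is in (0, 100]."""
    if not samples:
        raise ValueError("no samples recorded")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


if __name__ == "__main__":
    timer = TurnTimer()
    with timer.measure("stt"):
        time.sleep(0.01)  # stands in for a real STT call
    print(percentile(timer.samples_ms["stt"], 95))
```

Tracking a high percentile rather than the average matters here, because the occasional very slow turn is what patients notice.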

Latency vs fluency: the real KPI

Reducing milliseconds is not enough.

The real goal is:

Maintaining a natural, continuous, and trustworthy conversation

This implies:

- Timely responses

- Human-like conversational pacing

- Ability to sustain long contexts without degradation

Perceived fluency is the true success metric.

Can AI agents sustain long conversations in healthcare?

Yes—but under certain technical conditions:

- Efficient context management (windowing, selective memory)

- Dynamic summarization of long conversations

- Separation between active and historical memory

The limitation is not the model’s capability, but the architecture supporting it.
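As an illustration of these conditions, the sketch below keeps a fixed window of recent turns as active memory and folds older turns into a running summary. The class is hypothetical, and `summarize` is an assumed callback that in practice might be a small model call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ConversationMemory:
    """Active memory is a window of recent turns; older turns are summarized."""

    max_active_turns: int = 4
    active: list[str] = field(default_factory=list)
    summary: str = ""

    def add_turn(self, turn: str,
                 summarize: Callable[[str, str], str]) -> None:
        self.active.append(turn)
        # Windowing: move the oldest turns out of active memory
        # and merge them into the historical summary.
        while len(self.active) > self.max_active_turns:
            oldest = self.active.pop(0)
            self.summary = summarize(self.summary, oldest)

    def prompt_context(self) -> str:
        """Build the context sent to the model: summary first, then recent turns."""
        parts = []
        if self.summary:
            parts.append(f"Summary of earlier conversation: {self.summary}")
        parts.extend(self.active)
        return "\n".join(parts)
```

The prompt then grows with the window size and summary length rather than with the full length of the call, which keeps per-turn inference time roughly stable in long conversations.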

Conclusion: latency as a competitive advantage

In healthcare, where patient experience is critical, latency shifts from being a technical issue to a strategic differentiator.

Organizations that invest in optimizing it achieve:

- Greater patient trust

- Better operational efficiency

- Higher conversion rates in key processes such as scheduling

About Rootlenses Voice

Rootlenses Voice is an AI voice agent designed to automate calls in complex industries like healthcare, combining:

- Architectures optimized for low latency

- Smooth and natural conversations

- Secure integration with clinical systems

- Real-time monitoring and analytics

The result: experiences that not only work, but build trust in every interaction.